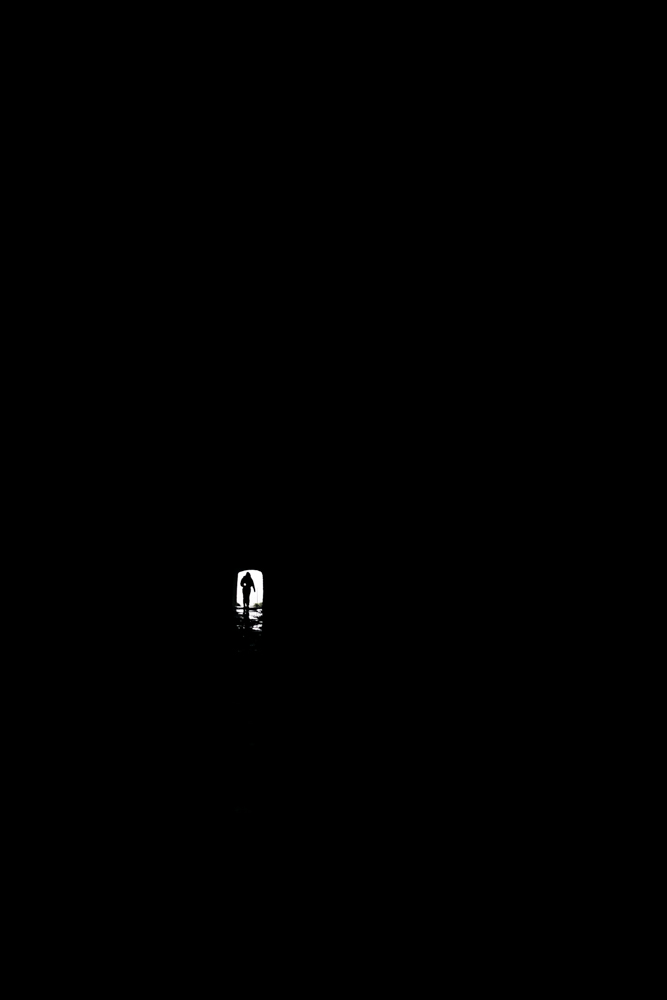

The Inevitability of Triviality

Triviale Maschinen haben nur einen Zustand: Sie liefern auf denselben Input immer den gleichen Output.

Heinz von Foerster

The quote could be loosely translated as ‘Trivial machines have a single state: Given the same input, they always produce the same output.’ In contrast, for non-trivial systems the output depends not only on the input but also on an inner, possibly unknown, state of the system. This inner state evolves with every input and, thus, the same input can lead to different outputs. In other words: the output may seem random to an observer, because it also depends on the complete history of inputs processed by the system, as reflected in its internal state.
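The distinction above can be made concrete with a small sketch. The names `trivial_machine` and `NonTrivialMachine`, as well as the doubling and summing rules, are arbitrary choices made purely for illustration; they are not taken from von Foerster's own formulation.

```python
def trivial_machine(x: int) -> int:
    """A trivial machine: the output depends on the input alone."""
    return 2 * x  # arbitrary fixed rule, chosen for illustration


class NonTrivialMachine:
    """A non-trivial machine: the output depends on input AND inner state."""

    def __init__(self) -> None:
        self.state = 0  # inner state, hidden from an outside observer

    def step(self, x: int) -> int:
        y = x + self.state  # output mixes the input with the inner state
        self.state += x     # the state evolves with every input
        return y


machine = NonTrivialMachine()

# Feed the same input three times to each machine.
trivial_outputs = [trivial_machine(2) for _ in range(3)]
nontrivial_outputs = [machine.step(2) for _ in range(3)]

print(trivial_outputs)     # [4, 4, 4]
print(nontrivial_outputs)  # [2, 4, 6]
```

Note that the non-trivial machine in this sketch is still fully deterministic: a fresh instance fed the same history yields the same outputs. It only looks unpredictable to an observer who sees the inputs and outputs but not `state`.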

Using this binary framework to describe actual systems can be challenging: When I type 2+2 into my calculator it will always yield 4. It is apparently trivial, until the environment acts upon it and the batteries run out, the circuit board corrodes, or the display breaks. If my bike always gave the same output when I start pedaling, I would be much more satisfied and my local bike shop would go out of business. If computers really were trivial, a whole lot of IT assistants could start looking for a new job right now. Systems decay over time, they are error-prone, and they are subject to the very same universe we are.

Another approach might be to treat triviality not as a binary decision, but as a continuous scale on which systems are ranked by their robustness. In a probabilistic sense, the calculator is rather trivial, as it gives the predicted output in a quantifiably large majority of cases. In contrast, living systems sit at the other end of the scale and are highly non-trivial, since they exhibit wildly different behaviour in seemingly similar situations.

However, when systems are ranked on such a scale of non-triviality, problems arise: How should I work on this very laptop when I assume that it could fail me anytime? If I admitted its non-triviality, I couldn’t work in the first place, because it could give any output, independent of the keys I am pressing. This example seems a little daft, but when transferred to human interactions, the exact same applies: How should I communicate with my colleague about work issues when assuming that the output will be determined by a non-trivial living system? How should I pass on instructions if the output is uncertain anyway? How could I speak coherently with my partner about serious topics when my input has potentially little effect on the output?

We constantly trivialize the non-triviality around us. We do so because it is necessary. When I type into my calculator, I expect a correct result. When I ask a friend a question, I expect to get an answer. Not because the answering system is trivial, but because I have to assume it is in order to ask the question in the first place. We trivialize machines, we trivialize humans, the reactions of strangers, friends, and partners. And if the output is unexpected, we don’t blame our foolish assumption of triviality; we blame the system itself. Seen this way, the scale doesn’t really describe the non-triviality of systems, but rather how much an observer trivializes them.

Where does this lead? Potentially nowhere; there might be other, more useful distinctions to draw. But having drawn this one, I wonder in which cases it might be wise to start acknowledging the non-triviality of systems.